TADM 2021

First International Workshop on Trusted Automated Decision-Making

An ETAPS 2021 Workshop

Workshop Goals

When can automated decision-making systems or processes be trusted? Is it sufficient if all decisions are explainable, secure, and fair? As more and more life-defining decisions are being relegated to algorithms based on Machine Learning (ML), it is becoming increasingly clear that the touted benefits of introducing novel algorithms, especially those based on Artificial Intelligence (AI), into our daily lives are accompanied by serious negative societal consequences. Corporations are incentivized to promote opacity rather than transparency of their decision-making processes, due to the proprietary nature of their algorithms. What disciplines can help software professionals demonstrate trust in automated decision-making systems?

Decision-making logic of black box approaches -- such as those based on deep learning or deep neural networks -- cannot be comprehended by humans. The field of "explainable AI," which prescribes the use of adjunct explainable models, partially mitigates this problem. Do adjunct models make the whole process trustworthy enough? Detractors of explainable AI propose that decisions that could potentially impact human safety be restricted to interpretable and transparent algorithms. Although there have been a few recent successes in the creation of interpretable models -- including decision trees and case-based reasoning approaches -- it is not clear whether they are sufficiently accurate or practical.

SATURDAY March 27, 2021

13:00 CET - 18:20 CET (GMT+1)

| Time | Event |
| --- | --- |
| 13:00 - 13:10 CET | Welcome Ramesh B. |
| 13:10 - 14:10 CET | Keynote Michael I. Jordan: The Decision-Making Side of Machine Learning: Computational, Inferential and Economic Perspectives [Luis Alexandre - Session Chair] |
| 14:10 - 14:25 CET | Short Break - 15 minutes |
| Invited Talks | |
| 14:25 - 14:55 CET | Interpretability and Interactivity in Machine Learning Through Differentiable Decision Trees - Andrew Silva [Ramesh - Session Chair] |
| 14:55 - 15:25 CET | Function and User-Satisfaction in Explainable AI by Kathleen Anne Creel [Ramesh - Session Chair] |
| 15:25 - 16:15 CET | Long Break - 50 minutes |
| Accepted Papers | |
| 16:15 - 16:35 CET | A Measure of Explanatory Effectiveness by Dylan Cope and Peter McBurney [Raj Dasgupta - Session Chair] |
| 16:35 - 16:55 CET | Longitudinal Auto Causal Discovery Can Help Increase Trust in Complex Decisions by Vivek Nallur [Raj Dasgupta - Session Chair] |
| 16:55 - 17:10 CET | Hotwash / Q & A |
| 17:10 - 17:20 CET | Short Break - 10 minutes |
| 17:20 - 18:15 CET | Transdisciplinary & Trustworthy Panel: Dylan Hadfield-Menell, Mark Ryan, Alka Patel [Ilya Parker - Session Chair] |
| 18:15 - 18:20 CET | Closing Remarks Ramesh Bharadwaj |

SUNDAY March 28, 2021

13:00 CEST - 18:20 CEST (GMT+2) (EU Standard Time Ends March 28 01:00 GMT)

| Time | Event |
| --- | --- |
| 13:00 - 13:10 CEST | Welcome from Madhavan Mukund |
| 13:10 - 14:10 CEST | Keynote - Cynthia Rudin: Interpretable Neural Networks for Computer Vision: Clinical Decisions that are Computer-Aided, not Automated [M. Mukund - Session Chair] |
| 14:10 - 14:25 CEST | Short Break - 15 minutes |
| Invited Industrial Talks | |
| 14:25 - 14:55 CEST | ExCITE AI - A Technology Agnostic Approach to Safety Critical AI Analysis by Sevak Avakians [Ramesh - Session Chair] |
| 14:55 - 15:25 CEST | Build and Deploy Trustworthy AI by Petar Tsankov [Ramesh - Session Chair] |
| 15:25 - 16:15 CEST | Long Break - 50 minutes |
| 16:15 - 17:15 CEST | Keynote Wendell Wallach: Toward Trustworthy Autonomous Decision-Making: Design, Testing, Compliance and Governance [Ilya Parker - Session Chair] |
| 17:15 - 17:25 CEST | Short Break - 10 minutes |
| 17:25 - 18:15 CEST | UnPlenary: TADM Participants [PC Members - Session Chairs] |
| 18:15 - 18:20 CEST | Closing Remarks Ramesh Bharadwaj |

Post-workshop proceedings

Members of the Program Committee will identify attendees who participate actively in workshop discussions and invite them to submit an extended abstract or a full paper for consideration in the workshop proceedings. According to current plans, the submission deadline for extended abstracts will be no later than one month after the workshop, and for full papers no later than three months after the workshop. Watch this space for announcements.

The board has approved the publication of the TADM 2021 proceedings in Electronic Proceedings in Theoretical Computer Science (EPTCS).

Accepted papers will appear in a special issue of EPTCS. We plan to have the proceedings available no later than six months after the workshop.

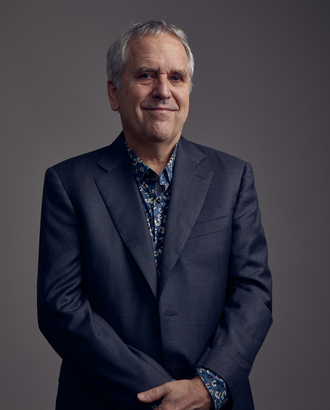

Michael I. Jordan

Michael I. Jordan is the Pehong Chen Distinguished Professor in the Department of Electrical Engineering and Computer Science and the Department of Statistics at the University of California, Berkeley. He is the recent winner of The Ulf Grenander Prize in Stochastic Theory and Modeling for foundational contributions to machine learning (ML), especially unsupervised learning, probabilistic computation, and core theory for balancing statistical fidelity with computation. His research interests bridge the computational, statistical, cognitive and biological sciences, and have focused in recent years on Bayesian nonparametric analysis, probabilistic graphical models, spectral methods, kernel machines and applications to problems in distributed computing systems, natural language processing, signal processing and statistical genetics. Prof. Jordan is a member of the National Academy of Sciences, a member of the National Academy of Engineering and a member of the American Academy of Arts and Sciences. He is a Fellow of the American Association for the Advancement of Science. He has been named a Neyman Lecturer and a Medallion Lecturer by the Institute of Mathematical Statistics. He received the IJCAI Research Excellence Award in 2016, the David E. Rumelhart Prize in 2015 and the ACM/AAAI Allen Newell Award in 2009. He is a Fellow of the AAAI, ACM, ASA, CSS, IEEE, IMS, ISBA and SIAM.

Cynthia Rudin

Cynthia Rudin is a professor of computer science, electrical and computer engineering, and statistical science at Duke University, and directs the Prediction Analysis Lab, whose main focus is interpretable machine learning. She is also an associate director of the Statistical and Applied Mathematical Sciences Institute (SAMSI). Previously, Prof. Rudin held positions at MIT, Columbia, and NYU. She holds an undergraduate degree from the University at Buffalo, and a PhD from Princeton University. She is a three-time winner of the INFORMS Innovative Applications in Analytics Award, was named as one of the "Top 40 Under 40" by Poets and Quants in 2015, and was named by Businessinsider.com as one of the 12 most impressive professors at MIT in 2015. She is a fellow of the American Statistical Association and a fellow of the Institute of Mathematical Statistics.

Some of her (collaborative) projects are: (1) she has developed practical code for optimal decision trees and sparse scoring systems, used for creating models for high stakes decisions. Some of these models are used to manage treatment and monitoring for patients in intensive care units of hospitals. (2) She led the first major effort to maintain a power distribution network with machine learning (in NYC). (3) She developed algorithms for crime series detection, which allow police detectives to find patterns of housebreaks. Her code was developed with detectives in Cambridge MA, and later adopted by the NYPD. (4) She solved several well-known previously open theoretical problems about the convergence of AdaBoost and related boosting methods. (5) She is a co-lead of the Almost-Matching-Exactly lab, which develops matching methods for use in interpretable causal inference.

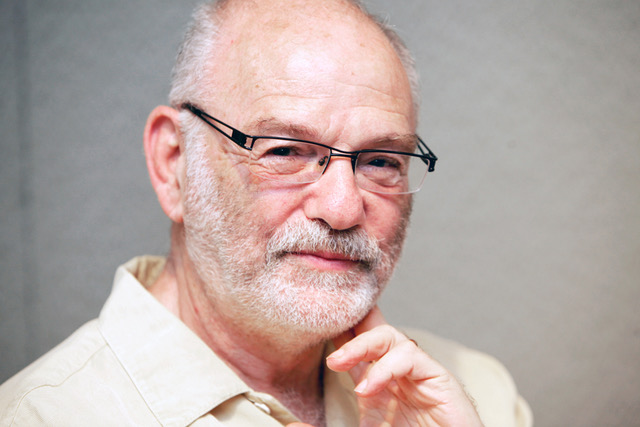

Wendell Wallach

Wendell Wallach is a scholar at Yale University's Interdisciplinary Center for Bioethics, where he chaired Technology and Ethics studies for eleven years. He is also a senior fellow at the Carnegie Council for Ethics in International Affairs (CCEIA), and a senior advisor to The Hastings Center. At CCEIA he co-directs the AI and Equality Initiative. Wallach’s latest book, a primer on emerging technologies, is entitled A Dangerous Master: How to keep technology from slipping beyond our control. In addition, he co-authored (with Colin Allen) Moral Machines: Teaching Robots Right From Wrong. The eight-volume Library of Essays on the Ethics of Emerging Technologies (edited by Wallach) was published by Routledge in Winter 2017. He received the World Technology Award for Ethics in 2014 and for Journalism and Media in 2015, as well as a Fulbright Research Chair at the University of Ottawa in 2015-2016. The World Economic Forum appointed Mr. Wallach co-chair of its Global Future Council on Technology, Values, and Policy for the 2016-2018 term, and he is presently a member of their AI Council. Wendell is the lead organizer for the 1st International Congress for the Governance of AI (ICGAI).

Interdisciplinary Panel

“Interdisciplinary thinking is rapidly becoming an integral feature of research as a result of four powerful “drivers”: the inherent complexity of nature and society, the desire to explore problems and questions that are not confined to a single discipline, the need to solve societal problems, and the power of new technologies.”

From Facilitating Interdisciplinary Research, published by The National Academies Press.

The nature of intelligence is so dynamic and complex that no single discipline can capture a complete understanding of it. As famous examples like Fei-Fei Li and Geoffrey Hinton show, key innovations in AI have been inspired by biology. Other disciplines are also looked to for their potential promise, though many interdisciplinary attempts have been less successful, such as the application of reward functions as conceived by economists for predicting human behavior. This panel explores how to maximize productive interdisciplinary approaches. We will focus primarily on which disciplines and practices lend the greatest strength to the development of trustworthy automated decision-making.

Invited Talks

Andrew Silva

I am a PhD student in the CORE Robotics lab at Georgia Tech under the advisement of Dr. Matthew Gombolay. My research is focused on advancing interactivity and interpretability in machine learning. I’m interested in building intelligent agents that can quickly adapt to new information and user preferences while being able to clearly communicate their decision-making criteria to their human counterparts.

Kathleen Creel

Kathleen Creel is the Embedded EthiCS Fellow at Stanford University based in the Center for Ethics in Society and the Institute for Human-Centered Artificial Intelligence. Her research explores the moral, political, and epistemic implications of machine learning as it is used in automated decision making and in science. Before her PhD in History & Philosophy of Science from the University of Pittsburgh, Kathleen was a software engineer at MIT Lincoln Laboratory.

Dr. Petar Tsankov

Petar holds a Ph.D. from ETH Zurich and is the CEO & Co-founder of LatticeFlow, a spin-off founded by AI researchers and professors from ETH Zurich with the mission to enable companies to deliver trustworthy AI models. Before LatticeFlow, he co-founded ChainSecurity, an ETH spin-off that was acquired by PwC. He graduated from Georgia Tech with two research awards and was awarded by the President of Bulgaria for his achievements in computer science.

Sevak Avakians

Sevak Avakians is the Founder and CTO of Intelligent Artifacts, a company that provides AI solutions for mission- and safety-critical applications. Mr. Avakians is a published physicist from Brookhaven National Lab under a grant from NASA and has worked as an information theorist, artificial intelligence researcher, cybersecurity analyst for the Federal Reserve and US Treasury, and software developer with proven success in enabling companies with breakthrough advances in machine learning, decision science, and analytics. Mr. Avakians’ work on sensor fusion, information processing, and automated decisions in robotics and network cybersecurity led to the development of the GAIuS™ framework on which all Intelligent Artifacts’ solutions are built.

Accepted Papers

Vivek Nallur

Vivek Nallur is an Assistant Professor at University College Dublin, Ireland. He is very interested in autonomous and adaptive machines, specifically how to predict emergent properties and system behaviour when complex parts interact. To investigate these, he uses multi-agent systems, simulations, economic mechanisms, machine learning, and any other tool he can utilize.

Peter McBurney

Peter McBurney is Professor of Computer Science in the Department of Informatics at King's College London. His primary research focus is on automated decision systems, including the design of agent communications languages, and applications of AI in financial and legal domains. He is the Co-Editor of The Knowledge Engineering Review, published by Cambridge University Press.

Dylan Cope

Dylan Cope is a Computer Science PhD candidate in the UKRI Centre for Doctoral Training in Safe and Trusted AI at King’s College London. His research is focused on explainee-centric explanation in the context of multi-agent communication.

Workshop Organizers

Ramesh Bharadwaj, NRL

From 2002 to 2007, Ramesh Bharadwaj ran a workshop series on Automated Verification of Infinite-State Systems (AVIS) at ETAPS, which was well received and made significant contributions to this area. The stakes are now higher and the field has moved further, necessitating the involvement of transdisciplinary researchers and practitioners to work in the socially necessary problem domain of trusted automated decision-making.

Ilya Parker, 3D Rationality

Ilya Parker will moderate a panel on transdisciplinary collaborative research on trustworthiness.

Program Committee:

Luís Alexandre

Baptiste Le Fevre

Raj Dasgupta

James Miller

Madhavan Mukund

Claire Pagetti

Martin Vechev

Get in touch

Please feel free to reach out to Ramesh Bharadwaj with any questions you may have.